Lightweight language models have moved from niche research experiments to essential tools for engineers, startups, and businesses that need fast, reliable AI without the heavy infrastructure of giant models. The best lightweight LLMs in 2026 are no longer just “smaller versions” of large systems. They are purpose-built systems engineered for low latency, low power consumption, and efficient reasoning.

Whether you’re building a coding assistant, deploying AI on the edge, or simply trying to get more performance from mid-range hardware, lightweight models are now capable of tasks that earlier required massive GPU clusters. Among the Top Lightweight LLMs in 2026, three models stand out consistently because of their real-world performance: Mistral 7B, Phi-3, and Claude Haiku.

Before diving deep, here’s a quick snapshot of these systems and what makes each one special.

Key Lightweight LLMs to Know in 2026 (Quick Pointers)

1. Mistral 7B

- Known for one of the fastest inference speeds in the 7B class

- Excels in edge deployments, chat systems, multilingual text, and rapid coding tasks

- Ideal for teams that need consistent accuracy with minimal GPU cost

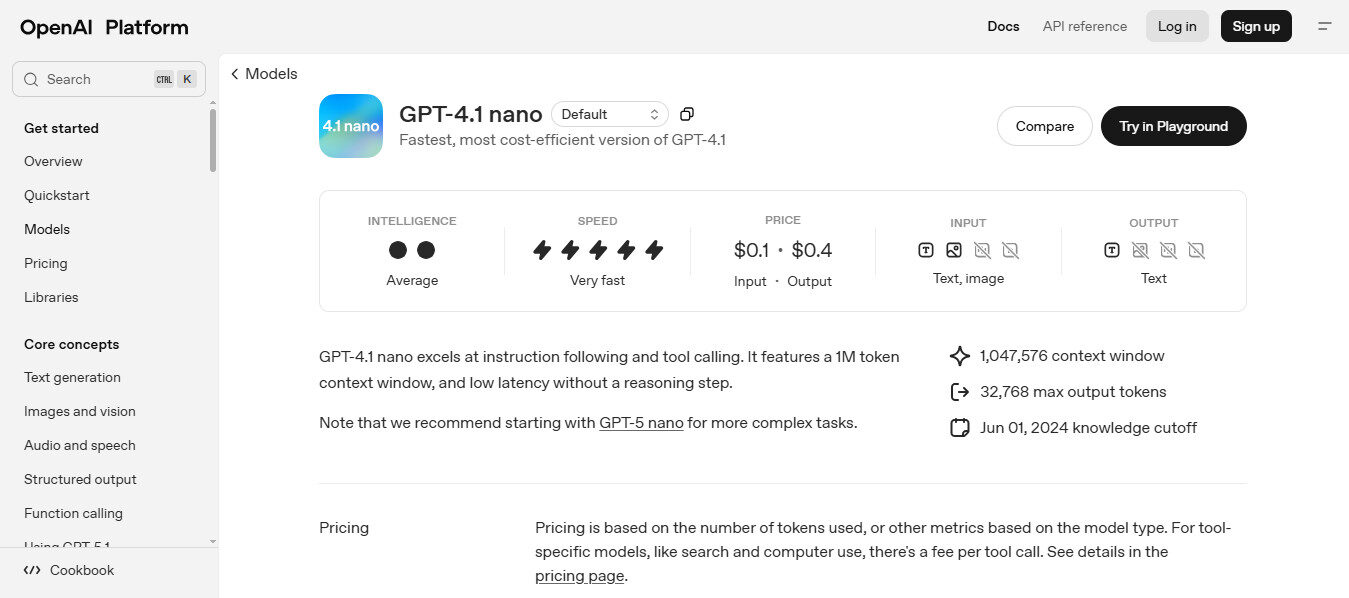

2. OpenAI’s GPT-4 Nano

- Built for extreme efficiency, especially mobile and embedded systems

- Strong micro-model reasoning, ideal for on-device intelligence and low-latency apps

3. Gemma 2B & Gemma 7B (Google)

- Clean instruction following with minimal drift

- Highly optimized for consumer hardware and local development environments

4. Meta’s Llama 3 Series

- Large open-source ecosystem with excellent fine-tuning support

- Performs well in multilingual tasks, generative workflows, and lightweight code generation

5. Microsoft Phi-3 Series

- Runs smoothly on mid-range CPUs and consumer GPUs

- Compact but strong reasoning ability

- Ideal for enterprise tools, local deployments, and coding tasks

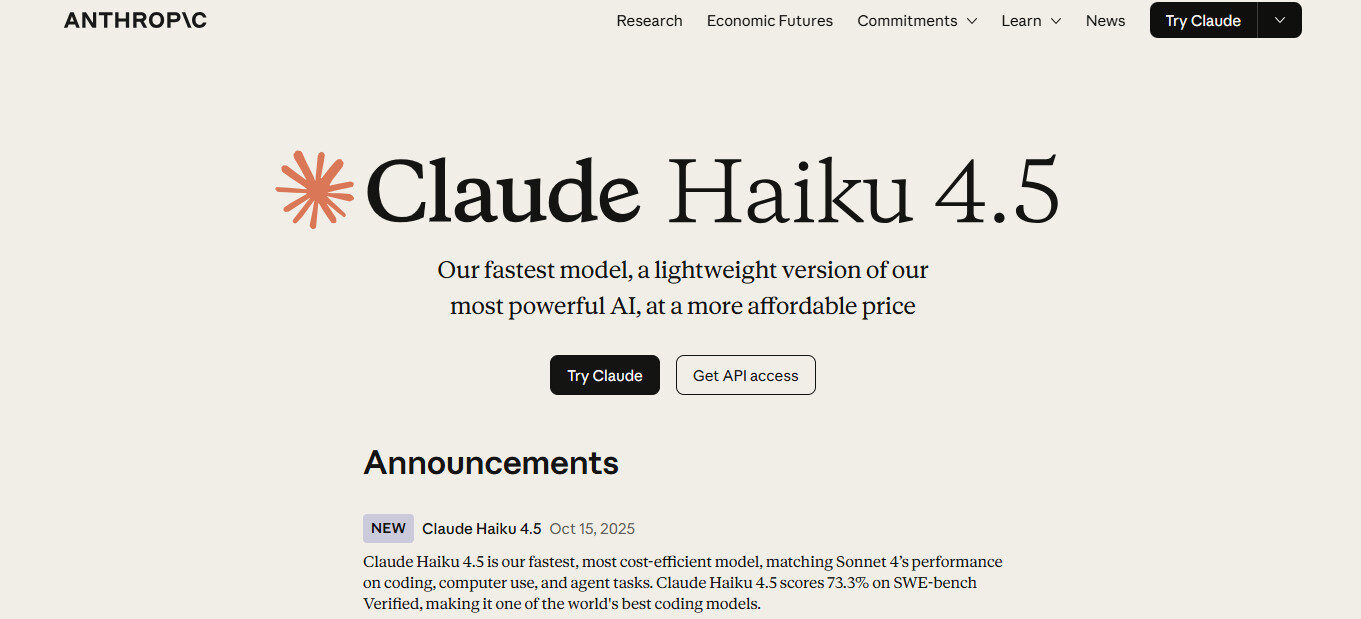

6. Claude Haiku

- Fastest model in the Claude 3 family

- Exceptional for lightweight reasoning, Q&A systems, and cost-efficient automation

- Highly reliable for customer support, summaries, and quick interpretation tasks

What Are Lightweight LLMs?

Lightweight LLMs are compact language models designed to deliver reliable reasoning and natural text generation without the heavy computational demands of large-scale systems. Instead of aiming for maximum size or complexity, these models focus on efficiency. They run smoothly on everyday hardware, including mid-range laptops, mobile devices, and edge environments where memory and power are limited. Their purpose is practical: give developers and businesses the ability to integrate AI into tools, apps, and workflows without relying on expensive servers or constant cloud access.

Despite their smaller size, modern lightweight models can understand instructions, summarize information, assist with coding, and support conversational tasks with surprising accuracy. They’re especially useful for offline applications, privacy-sensitive operations, and products that require fast response times. In many cases, they offer the most balanced mix of speed, cost, and adaptability for real-world deployment.

Why Lightweight LLMs Matter in 2026

As models expand beyond hundreds of billions of parameters, cost and accessibility have become critical challenges. Businesses increasingly prioritize:

- Lower operational costs

- Reduced inference time

- Local deployment capabilities

- Better privacy and data control

- Energy-efficient performance

This shift explains why search demand for Best Lightweight LLMs in 2026 has increased significantly. These models now serve as the backbone for chatbots, coding agents, support tools, IoT systems, and multi-agent applications that need reliability without complexity.

Deep Dive into the Best Lightweight LLMs in 2026

Below is a detailed breakdown of each model: how it works and where it excels. The goal here is not to repeat marketing claims but to offer a grounded, technical view based on current industry usage and test data.

1. Mistral 7B

Mistral 7B continues to dominate the discussion around the Best Lightweight LLMs in 2026. The model’s efficiencies are not accidental; they stem from careful architectural decisions, tight training data curation, and extensive instruction tuning.

Why Mistral 7B stands out

- Runs efficiently on a single consumer GPU

- Delivers stable reasoning without drifting or hallucinating heavily

- Has strong multilingual support, especially for European languages

- Frequently used as a base model for fine-tuned enterprise chatbots

Mistral’s tokenizer and training choices also help it outperform older models of similar size on coding, translation, and domain-specific writing. Many developers rely on Mistral for coding tasks because its step-by-step outputs are clean and consistent.

Favored use cases

- Customer support automation

- Local RAG systems

- Browser-based assistants

- Low-latency coding helpers

- Workflow orchestration tools

In short, Mistral remains a safe, predictable option when speed and stability matter.

2. OpenAI’s GPT-4 Nano: A New Standard for Mobile AI

GPT-4 Nano marks OpenAI’s push toward ultra-efficient models that still maintain a strong level of reasoning reliability. Unlike larger versions in the GPT-4 family, Nano is tuned specifically for devices where power limits, thermal restrictions, and memory ceilings create real barriers for deployment.

What makes GPT-4 Nano important

- It’s designed for phones, tablets, wearables, and IoT systems

- Offers tight response control, making it suitable for safety-first applications

- Delivers strong reasoning despite its small footprint

- Ideal for apps that require privacy because processing can remain on-device

Developers working on consumer applications prefer Nano because it allows real-time computation with very low energy consumption. Its performance on short reasoning tasks, routing queries, and personal-assistant workflows makes it one of the quiet but important competitors in the Best Lightweight LLMs in 2026 landscape.

3. Gemma 2B & Gemma 7B: Google’s Focus on Clean, Compact Intelligence

Gemma models were built with one goal: deliver clear, controlled, predictable output without requiring heavy hardware. The two main variants, 2B and 7B, serve different audiences. The 2B version is exceptionally light and perfect for entry-level experimentation or mobile AI. The 7B version delivers stronger reasoning for research, automation, and coding tasks.

Why Gemma models stand out

- Very strong instruction alignment compared to other models of similar size

- Clean sentence structure with minimal over-generation

- Works smoothly on mid-tier GPUs and mid-range laptops

- Good choice for local RAG, educational tools, and lightweight analysis workloads

Gemma has quickly become a favorite among developers who want a balance between small size and reliable instruction following. Its efficiency helps it blend into workflows that previously depended heavily on much larger systems.

4. Meta’s Llama 3: The Open-Source Backbone of 2026

Meta’s Llama 3 series adds an important dimension to the list of Best Lightweight LLMs in 2026 because it offers both scalability and community support. The smaller variants provide strong baseline reasoning, while the broader ecosystem supports fine-tuning, multimodal use, and custom domain adaptation.

Why Llama 3 remains influential

- Massive open-source community makes integration easy

- Fine-tuning support is stronger than for most proprietary models

- Good multilingual capabilities and structured writing

- Often chosen for corporate internal deployments where customization matters

Llama 3’s biggest advantage is how easily developers can shape it into domain-specific models. Industries that rely on custom language systems—healthcare, finance, logistics, and policy research—benefit from the flexibility and transparency it offers.

5. Phi-3: Microsoft’s Breakthrough in Compact Reasoning

Phi-3 is one of the most talked-about models in the landscape of Top Lightweight LLMs in 2026. It’s designed to run on consumer-grade laptops, mid-tier GPUs, and even some mobile hardware without major compromises in quality.

Why Phi-3 became a turning point

- Heavy use of synthetic reasoning datasets

- Excellent chain-of-thought performance for its size

- Very low VRAM requirements

- Available in multiple variants tuned for chat, coding, and reasoning

Phi-3 consistently ranks among the best lightweight models for coding because it understands instructions cleanly and outputs structurally sound code.

Favored use cases

- Offline personal assistants

- Classroom educational tools

- Scientific calculators and reasoning bots

- Edge-deployable industry applications

Its compact footprint makes it one of the most accessible models of the year.

6. Claude Haiku: Speed Above Everything

Claude Haiku is the fastest and most economical member of the Claude family. While it’s small compared to Sonnet or Opus, it delivers remarkable performance in tasks that require speed without sacrificing reliability.

What makes Haiku special

- Lightning-fast inference speeds

- Excellent factual accuracy on short tasks

- Surprisingly strong logical reasoning despite its size

- Adaptability to retrieval-augmented scenarios

If your goal is to build a support bot, recommendation engine, or lightweight automation agent, Haiku delivers results instantly and consistently. It remains a top pick among businesses searching for the Best Lightweight LLMs in 2026 because it combines speed with high-quality responses.

Comparison: Best Lightweight LLMs in 2026

| Model | Strength | Best Use Case | Hardware Needs |
| --- | --- | --- | --- |
| Mistral 7B | Balanced speed and reasoning | Chatbots, RAG, coding | Low |
| GPT-4 Nano | High accuracy for its size | Mobile AI, embedded apps | Very low |
| Gemma 2B / 7B | Clean outputs, strong instruction tuning | Local tools, research | Low |
| Llama 3 | Open ecosystem | Fine-tuning multilingual apps | Medium |
| Phi-3 | Best hardware efficiency | Edge deployments, apps | Very low |
| Claude Haiku | Fastest execution | Support systems, routing | Cloud API |

Choosing the Right Lightweight Model

Selecting from the Top Lightweight LLMs in 2026 depends on what you prioritize:

If speed matters most:

Claude Haiku

If coding accuracy is key:

Phi-3 or Mistral 7B (both are strong lightweight options for coding)

If you need cloud-scale throughput:

Mixtral 8x7B

If you want local deployment:

Phi-3 or Mistral 7B

If cost and hardware efficiency are the main concerns:

Phi-3 or Gemma 2B (They operate reliably even on entry-level GPUs, CPUs, and other low-spec devices.)

If you want the best open-source ecosystem:

Llama 3 (Strong community support and excellent for fine-tuned enterprise solutions.)

If you’re building mobile or embedded applications:

GPT-4 Nano or Gemma 2B (These are engineered specifically for minimal memory and power use.)

The ideal model depends heavily on context, dataset quality, and whether fine-tuning is part of your workflow.
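The priorities above can be captured as a simple lookup table. The sketch below is only an illustration: the dictionary keys and the `shortlist` helper are hypothetical names introduced here, and the model lists are transcribed from this section rather than derived from any benchmark.

```python
from collections import Counter

# Priority -> suggested models, transcribed from the list in this section.
# The key names are made up for this example.
RECOMMENDATIONS = {
    "speed": ["Claude Haiku"],
    "coding": ["Phi-3", "Mistral 7B"],
    "cloud_throughput": ["Mixtral 8x7B"],
    "local_deployment": ["Phi-3", "Mistral 7B"],
    "cost": ["Phi-3", "Gemma 2B"],
    "open_source": ["Llama 3"],
    "mobile": ["GPT-4 Nano", "Gemma 2B"],
}


def shortlist(priorities: list[str]) -> list[str]:
    """Rank models by how many of the given priorities suggest them."""
    votes = Counter()
    for priority in priorities:
        # Unknown priorities contribute no votes instead of raising.
        votes.update(RECOMMENDATIONS.get(priority, []))
    # Most votes first; ties are broken alphabetically for a stable order.
    ranked = sorted(votes.items(), key=lambda item: (-item[1], item[0]))
    return [model for model, _ in ranked]


print(shortlist(["coding", "local_deployment", "cost"]))
# ['Phi-3', 'Mistral 7B', 'Gemma 2B']
```

A vote count like this only narrows the field. It does not replace testing the shortlisted models on your own prompts and hardware.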

What Makes a Good Lightweight LLM in 2026?

A truly effective lightweight model should meet four core standards:

1. Low latency

Inference speed is a major differentiator. The best lightweight models respond in milliseconds.
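Latency claims are easiest to trust when you measure them on your own setup. Below is a minimal timing sketch that assumes your model is wrapped in a plain Python callable. `measure_latency_ms` and `fake_generate` are hypothetical names, and `fake_generate` only sleeps briefly in place of a real model call.

```python
import math
import statistics
import time


def measure_latency_ms(generate, prompt, runs=20):
    """Call generate(prompt) `runs` times; return (median, p95) latency in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        generate(prompt)
        samples.append((time.perf_counter() - start) * 1000)
    samples.sort()
    # Nearest-rank 95th percentile of the sorted samples.
    p95_index = math.ceil(0.95 * len(samples)) - 1
    return statistics.median(samples), samples[p95_index]


def fake_generate(prompt):
    # Hypothetical stand-in for a real model call; replace with your own client.
    time.sleep(0.002)
    return prompt.upper()


median_ms, p95_ms = measure_latency_ms(fake_generate, "hello", runs=10)
print(f"median: {median_ms:.1f} ms, p95: {p95_ms:.1f} ms")
```

Reporting the 95th percentile alongside the median matters because occasional slow responses are often what users notice in interactive apps.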

2. Efficient memory usage

Models like Phi-3 can run within 4–8 GB VRAM, making them ideal for on-device AI.
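As a rough back-of-the-envelope check on figures like these, the memory needed for a model's weights is approximately the parameter count multiplied by the bytes used per weight. The sketch below makes that arithmetic explicit. The 3.8 and 7 billion parameter counts are illustrative round figures, and the estimate covers weights only: the KV cache, activations, and runtime overhead come on top.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    bytes_per_weight = bits_per_weight / 8
    # Billions of parameters times bytes per parameter gives gigabytes.
    return params_billions * bytes_per_weight


# Illustrative sizes only (assumed round numbers, not official specifications).
example_sizes = {"3.8B-parameter model": 3.8, "7B-parameter model": 7.0}

for name, size in example_sizes.items():
    for bits in (16, 8, 4):
        print(f"{name} at {bits}-bit: ~{weight_memory_gb(size, bits):.1f} GB")
```

By this estimate, quantizing from 16-bit to 4-bit cuts the weight footprint to a quarter, which is a large part of why small models fit on consumer hardware.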

3. Adaptability

Fine-tuning capabilities, compatibility with RAG, and strong instruction-following are necessary in practical environments.

4. Reliability

Lightweight does not mean low quality. The top models answer consistently and maintain factual correctness on short prompts.

Where Lightweight LLMs Are Used Today

The surge in adoption across 2026 is driven by real operational needs:

Coding agents

- Many developers use Mistral, Phi-3, or Haiku to generate, analyze, and debug code.

Consumer AI devices

- Smart notebooks, AR glasses, and embedded sensors rely on models like Phi-3.

Enterprise workflows

- Lightweight models are deployed in CRM systems, internal search tools, and document automation.

Customer support

- Haiku dominates this segment because of its rapid response and low cost.

Education

- Locally run models help with tutoring, STEM explanations, and interactive study tools.

The versatility of the Best Lightweight LLMs in 2026 shows how quickly the AI world has shifted toward efficiency.

Final Thoughts

The competition among lightweight models is stronger than ever. Compact architectures are advancing fast enough to challenge the capabilities of last year’s large proprietary systems. Whether you’re optimizing for cost, prioritizing fast interaction, or running AI on small devices, you have solid choices across open-source and proprietary ecosystems.

As long as you clearly define your workload and performance expectations, you will find the right fit within today’s landscape of the Best Lightweight LLMs in 2026.

FAQs

What are lightweight LLMs and why do they matter in 2026?

They’re smaller AI models built for speed and low hardware use. In 2026 they matter because companies want fast, affordable, and private AI that can run locally instead of relying on large cloud systems.

Which lightweight LLM works best for coding?

Mistral 7B and Microsoft Phi-3 stand out. They generate clean code, follow instructions well, and run smoothly on mid-range hardware.

What’s the top lightweight model for mobile or embedded devices?

GPT-4 Nano is the strongest option. It’s built for tight power and memory limits, making it ideal for phones, wearables, and IoT apps.

Which lightweight LLM is the most budget-friendly?

Phi-3 offers the best value. It performs well on low-spec CPUs and GPUs, helping teams keep costs down without losing quality.

How do I choose the right lightweight model?

Pick based on your priority: Haiku for speed, Phi-3 or Gemma 2B for low-cost local use, Mistral 7B for balanced accuracy, GPT-4 Nano for mobile apps, and Llama 3 if you want open-source flexibility.